Archive: January, 2023

The creative urge is... hey, focus!

posted by Jeff | Tuesday, January 31, 2023, 12:03 AM | comments: 0

I am feeling pretty energized lately about doing creative stuff. I'm not entirely sure what's driving that, and honestly I'm not sure it matters. I mean, I'm a little curious about the origin, if only to bottle it and use it when I'm not feeling it.

It wasn't that long ago that I was obsessed with lighting, and I still am. But I'm also kind of obsessed with how to replace the cabinets in our butler's pantry with some shelves that have embedded lights in them. I remembered last weekend how much I love photography. And of course, I want to shoot video so badly that I can taste it, and I have plans for that which require making a schedule. Oh, and I have some software coding projects in mind, too.

This is the most intense these urges have been since possibly my late 20s. What's hard is that I can't seem to focus on any one of these things. I'm feeling the ADHD, with a self-awareness that I haven't had before. It's said that ADHD can be a superpower when you can settle into a hyperfocus mode, but it seems hard to choose that mode. I just find myself in it when doing certain things, like writing code or playing a LEGO video game. Task switching is generally difficult for people with ADHD, but I find that when I break things down into little chunks, multitasking actually becomes possible. You should see my first hour of work... I'm checking work and personal email, knocking out that Wordle, catching up on Slack messages, scanning my Facebook memories, reviewing the sprint board for my team... I'm doing all of that. So how do I apply that to the creative endeavors that take a lot longer?

I know now that so much of the struggle that I've experienced has come from fighting myself. I didn't know I had ADHD in school and college, but its effects caused a lot of self-loathing. I thought my inability to focus and finish things was a personality flaw, not brain chemistry. Now I know to lean into it, and I feel like it's helping me professionally. The challenge now is learning to apply it to creative things.

A concrete example of this is to look at all of those things that I want to do, choose one, and look for a smaller, interim outcome. I can get one of those video things scheduled, then I can move on to some prototyping for the code thing. For the shelving, I can map out what I would need to do it. If I can't focus on one thing, I can focus on all the things in short bursts. Again, it's what I do at work. What I hope might happen is that one of these things drifts into hyperfocus, and then the outcomes are bigger.

Trying something isn't a lifelong commitment

posted by Jeff | Saturday, January 28, 2023, 5:44 PM | comments: 0

Back in August I spontaneously (and possibly under the influence) decided to buy some used electronic drums. I had been recreationally thinking about it for years. The opportunity presented itself on the Facebook from someone in the neighborhood, so an hour later, I had electronic drums in my house.

At first, I spent about 15 minutes behind them almost every day. Over time, the frequency decreased, but my ability to make noise with them increased. I was learning entirely from YouTube videos. I reached a point where I could pick up variations pretty quickly, and I started to figure out how to put the occasional fill in there. To my ears at least, it sounded a lot like drumming, if not particularly complex in nature. I didn't really play along with anything, so I probably would not have been useful to a band.

Curiosity satisfied, I finally packed them back up into their boxes. The truth is, I understand them well enough to know that if I'm ever going to advance, I will have to put in a lot of time. I don't feel like I have that time, or that the reward and satisfaction are high enough to commit.

That's OK.

Call it another midlife epiphany, but I think I understand now that just because you try something new doesn't mean that you have to commit to it forever. That seems obvious when you say it out loud, but I never really realized it. I suspect that part of my thinking is that I simply have too many things that I start and don't finish. While true, if I take inventory of the things that I have finished in the last year, I think I am pretty entitled to not finish stuff.

So try things. You don't have to keep doing them.

A genuine teenage grounding

posted by Jeff | Friday, January 27, 2023, 10:57 PM | comments: 0

If there's a positive to having a kid with an IEP and a mom who volunteers at school, it's that we're never very far from what goes on with Simon at school. To that end, today we learned that Simon got busted for flipping off a female classmate. The circumstances are sketchy, but it doesn't matter. It's obviously not something that he should do. Ever.

We've certainly had plenty of pre-teen (he's not 13 yet) combat around here, but it has never reached a "you're grounded" point. That's kind of weird given the increasingly frequent arguments about literally everything. But it's one thing to be hostile at home, and quite another at school.

So tonight was, in the strangest way, better, because he couldn't just get lost in online gaming for the night. Quite the opposite: the deal was that he had to work his way out of groundedness with a list of chores. There was a little pushback at first, but it turned into doing chores pretty quickly. While this was going on, we were playing tunes, cooking food (and making cocktails) and doing other things around the house. The surprising part was that, at one point, I recall him saying, "I should get grounded more often!"

Diana even pointed out that it was nice to just have him in the room with us, talking about music or movies or about life. She's totally right about that. I don't like withholding games from him, because it's his safe, unwinding place. But sometimes he does act like an addict toward them, which strikes deep fear in me given the history of addiction in my family.

It does make me wonder if we should just designate one night a week as a no-screen chore night. It might not be the worst thing for him.

Not terrible pandemic memories

posted by Jeff | Friday, January 27, 2023, 7:33 PM | comments: 0

If you read my nonsense here, then you know that I do annual playlists. My 2020 playlist is playing right now, as we mill around the kitchen eating, cleaning, browsing and doing vision therapy (at least, Simon is). Here's the weird thing... the memories around these songs aren't terrible.

The pandemic meant that we necessarily spent a fair amount of time away from others. It's frustrating that more than a million Americans died anyway, but we did our best to do our part. On Friday nights, we would typically watch Suzy & Alex, a duo we saw on a cruise a year prior, live-streaming their performances from the UK. The time difference was perfect because they started before I even finished work. Then we would listen to our playlist with all the windows open, making beverages and dinner, and generally end with a walk through the neighborhood.

Yes, the lack of in-person contact with others sucked. But despite this, it seems like we overcompensated for the isolation with more in-house activity, and that wasn't terrible.

The coronavirus pandemic was a pretty terrible, global event. In retrospect, one of the frustrating things is that we didn't learn much from it. Our healthcare system is as terrible and as expensive as ever, we still lost more than a million citizens in our country alone, and we're doing nothing to prepare for the next pandemic. But despite all of that, I feel like we had a great opportunity to really take a hard look at our lives, how we were living them, and evaluate what was really important.

.NET development on Mac is real (if a little tricky)

posted by Jeff | Wednesday, January 25, 2023, 9:30 PM | comments: 0

Yesterday I mentioned how enamored I was with Apple's new (as of last year) generation of laptops built on its own silicon, but the lingering question in my mind was, could I get away with not running Windows in a VM at all? So I borrowed an M1-based Mac and gave it a shot. The good news is that it's possible, though it took me about four or five hours of messing around to make it roughly equivalent to the Windows experience.

I've tried to go down this road before, probably in the .NET Core 3.1 days. I got pretty close, and I remember even making a blog post showing I could do it, but the experience wasn't great. Part of the question is whether or not Visual Studio for Mac is a first-class experience, as it has evolved from its Xamarin days. I'm not that interested in answering that question, because on the Windows side, Visual Studio is great but still feels a little incomplete without ReSharper. Or maybe I'm just so dependent on those refactoring bits that I can't live without them. The limitation in those days was working with Azure Functions, and, spoiler alert, that's still the hard part. To be fair, they barely worked right on Windows when a new .NET came out. I'm also not interested in trying to make VS Code work with a complex solution, because while I've read that it might be possible, the mix of plugins and CLI stuff is too complicated.

To make this as real-world as possible, I of course wanted to be able to work on POP Forums in an unrestricted way. That app has Azure Functions, a Razor Class Library (RCL), TypeScript, embedded and built JS and CSS, Azure Storage, Elasticsearch and Redis, plus SQL Server. Lots of tools, lots of stuff to potentially go wrong!

This time, I started with JetBrains' Rider, the variation on IntelliJ for .NET that appears to share some code with ReSharper. I've been Rider-curious for a long time, in no small part because it's crazy fast compared to Visual Studio, especially Visual Studio with ReSharper. Things are different, but for the most part they're all there. The learning curve is not that big, and it's mostly an issue of looking around and learning some different keyboard shortcuts, though most are the same.

Next, the supporting services are easy enough to spin up in Docker containers. Specifically, I use containers for SQL Server, Redis, Elasticsearch and Azurite. The last one simulates Azure Storage, and while Rider has some stuff to run it on Node, I find it's easier to just run the container. Those parts are straightforward.
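When a setup like this misbehaves, a quick preflight check that the containers are actually listening can save some head-scratching. Here is a minimal Python sketch of that idea; it is not part of POP Forums or Rider, and it assumes the containers are mapped to their usual default ports (1433 for SQL Server, 6379 for Redis, 9200 for Elasticsearch, 10000 to 10002 for Azurite's blob, queue and table services), so adjust the table to match your own port mappings.

```python
from __future__ import annotations

import socket

# Usual default ports for the local services. These are assumptions;
# change them to match your own container port mappings.
DEFAULT_PORTS = {
    "SQL Server": 1433,
    "Redis": 6379,
    "Elasticsearch": 9200,
    "Azurite blob": 10000,
    "Azurite queue": 10001,
    "Azurite table": 10002,
}


def is_port_open(host: str, port: int, timeout: float = 0.5) -> bool:
    """Return True if something accepts a TCP connection on host:port."""
    try:
        # create_connection raises OSError when refused, unreachable or timed out.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def check_services(host: str = "localhost", ports: dict[str, int] | None = None) -> dict[str, bool]:
    """Map each service name to whether its port is reachable."""
    ports = DEFAULT_PORTS if ports is None else ports
    return {name: is_port_open(host, port) for name, port in ports.items()}


if __name__ == "__main__":
    for name, up in check_services().items():
        print(f"{name:14} {'up' if up else 'DOWN'}")
```

This only proves that something accepts a TCP connection on each port, not that the service behind it is healthy, but that is usually enough to catch a container that never started.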

Next was figuring out how to make an old Gulp task run, as it copies a bunch of npm packages, minifies things and so on. It does this in the aforementioned RCL so the output can be packed into a NuGet package. You need Node.js installed, just as you do on Windows, but beyond that, the documentation simply shows how to make Gulp part of your build.

So, about the Azure Functions. As fantastic a resource as they are in Azure, they don't feel coherent with the rest of .NET or Azure. They break conventions, have had weird dependencies over the years, and used to lag behind .NET releases. It's better now, but not great. They wouldn't even build out of the box on an ARM Mac. The first step, called out on a page that took me hours to find, is that you need to install the tooling via Homebrew. Before that, it wouldn't build because it was looking for some .NET Core 3.1 stuff, which doesn't even run natively on ARM Macs. I then had to point Rider at the newly installed tools in its settings.

Next, the functions couldn't connect to SQL Server, because a trusted connection string won't work when you're not on Windows. (I use one for simplicity.) In the web app, it was easy enough to make an appsettings.Development.json file, .gitignore it, and override the connection string with an actual user ID and password. But in the functions app, of course it's not that easy. That team wants to force you into stashing your settings in a file called local.settings.json, so that it never gets used in actual Azure. That means environment-specific settings files are a no-go. You can't specify an arbitrary file name either, which you'd only discover by reading source you'll never look at. That's super lame, because a lot of teams use these variation files not for other environments, but so they can experiment locally without risking a commit of their config values to source control. So I had to set up an environment variable in Rider, which I guess is fine, but only because I don't need to change it often.
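To make the override problem concrete, here is a toy Python model of the layered configuration ASP.NET Core uses by default: appsettings.json, then appsettings.{Environment}.json, then environment variables, with later sources winning. Environment variable names use a double underscore where configuration keys use a colon, which is why an environment variable works as an escape hatch when a settings file can't be used. This is a simplified sketch, not the .NET implementation: it ignores user secrets and command-line arguments, and it treats keys as case-sensitive, which .NET does not. The `ConnectionStrings:Main` key and its values are hypothetical.

```python
from __future__ import annotations

import os


def flatten(settings: dict, prefix: str = "") -> dict[str, str]:
    """Flatten nested JSON-style settings into colon-delimited keys,
    e.g. {"ConnectionStrings": {"Main": "..."}} -> {"ConnectionStrings:Main": "..."}."""
    flat: dict[str, str] = {}
    for key, value in settings.items():
        full_key = f"{prefix}:{key}" if prefix else key
        if isinstance(value, dict):
            flat.update(flatten(value, full_key))
        else:
            flat[full_key] = str(value)
    return flat


def env_layer(environ: dict[str, str]) -> dict[str, str]:
    """Treat environment variables as config keys, mapping "__" to ":"."""
    return {name.replace("__", ":"): value for name, value in environ.items()}


def build_config(*layers: dict[str, str]) -> dict[str, str]:
    """Merge layers in order; a key in a later layer overrides earlier ones."""
    merged: dict[str, str] = {}
    for layer in layers:
        merged.update(layer)
    return merged


if __name__ == "__main__":
    # Hypothetical key and values, for illustration only.
    appsettings = flatten({"ConnectionStrings": {"Main": "Server=localhost;Trusted_Connection=True;"}})
    development = flatten({"ConnectionStrings": {"Main": "Server=localhost;User Id=sa;Password=<secret>;"}})
    config = build_config(appsettings, development, env_layer(dict(os.environ)))
    print(config["ConnectionStrings:Main"])
```

Merging the layers in order with `dict.update` is what makes "last one wins" fall out naturally: setting `ConnectionStrings__Main` in the environment would beat both JSON files.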

At this point, everything builds, everything runs, and it's just a matter of seeing whether day-to-day coding works the way you're used to in Visual Studio on Windows. The big concern I had was the combination of hot reload and what's called Browser Link on Windows. There are settings for "Hot Reload" in Rider, but they never worked for me. There's a plugin called .NET Watch Run Configuration, and it's like magic. dotnet watch is pretty straightforward, but for reasons I can't explain it watches everything, including CSS and TypeScript, even in the RCL, and it magically reloads the page in the browser, unprompted. I assume it's talking directly to Chrome. I don't even need to save the file; it's already happening when I change a Razor file in the RCL, and it trickles down into the web app. I haven't even been able to get this working consistently on every machine using Visual Studio on Windows. I'm beyond excited about this.
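dotnet watch does a lot more than this (rebuilding, applying hot reload, refreshing the browser), but the first job of any watcher is noticing which files changed. Here is a minimal polling sketch in Python that illustrates only that step. The watched extensions and the `on_change` callback are assumptions for the example, and real tools typically rely on operating system file-system events rather than polling.

```python
from __future__ import annotations

import time
from pathlib import Path
from typing import Callable

# Hypothetical set of extensions worth watching in a web project like this one.
WATCHED_SUFFIXES = {".cs", ".cshtml", ".razor", ".css", ".ts", ".js"}


def snapshot(root: Path, suffixes: set[str] = WATCHED_SUFFIXES) -> dict[Path, float]:
    """Record the last-modified time of every watched file under root."""
    mtimes: dict[Path, float] = {}
    for path in root.rglob("*"):
        if path.suffix not in suffixes:
            continue
        try:
            if path.is_file():
                mtimes[path] = path.stat().st_mtime
        except FileNotFoundError:
            # The file vanished between listing and stat; skip it.
            continue
    return mtimes


def changed_files(before: dict[Path, float], after: dict[Path, float]) -> set[Path]:
    """Return files that were added, removed, or modified between two snapshots."""
    added_or_modified = {path for path, mtime in after.items() if before.get(path) != mtime}
    removed = set(before) - set(after)
    return added_or_modified | removed


def watch(root: Path, on_change: Callable[[set[Path]], None], interval: float = 0.5) -> None:
    """Poll forever, calling on_change with the changed paths whenever something changes."""
    previous = snapshot(root)
    while True:
        time.sleep(interval)
        current = snapshot(root)
        changes = changed_files(previous, current)
        if changes:
            on_change(changes)
        previous = current
```

The callback is where a real tool would kick off a rebuild or push a refresh to the browser; the snapshot comparison is the same idea either way.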

The rest is just exploration. There's a structure window that replaces the class member dropdowns in VS, and it also works in HTML and CSS files. If you use the right keyboard shortcut profile, many things are the same, like Ctrl-T-L to run all tests, F2 to rename (good thing the Touch Bar is gone!), Option-Enter then arrows to introduce a private field, or Ctrl-N to get to anything. Things like extract interface are still in a menu, while others like Generate -> Constructor don't have a keyboard shortcut in every profile. I keep going back and forth between the "ReSharper" and "ReSharper (Mac)" schemes, the latter of which uses Command instead of Ctrl for most things. There are little trade-offs, but you can of course map anything to anything.

Once I figured all of that out, though, I was kind of surprised at how fast and robust everything was. It isn't even close when I race it against my Surface Laptop 4. .NET on Mac is legit, and free of Windows.

AI won't be good for search or independent publishers

posted by Jeff | Wednesday, January 25, 2023, 7:11 PM | comments: 0

There's a lot of talk right now about the gold rush around artificial intelligence, and how Google is starting to panic while Microsoft is planning to use ChatGPT in its products. On the surface, this sounds great, but let's remember that time when Facebook's chat bot went racist in just a weekend. The problem with AI is that the scientists haven't figured out how to code critical thinking or morality. Certainly it's bad for the Internet, but it's even worse for the independent publishers who put things on the Internet. Let me explain.

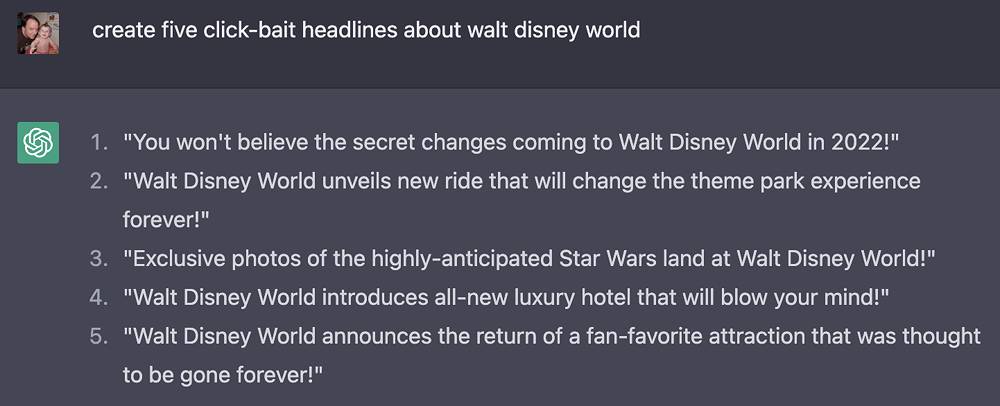

If you think AI will improve search, consider how terrible the current algorithms are. Not only do they tend to reinforce what you already believe by serving you what you want, but they do a horrible job of filtering out click-bait nonsense. The average news suggestions read like, "10 things that make this thing quiver at the sight of a phenomenon you won't believe." In theme park circles, an area I follow, you get things like, "This theme park is shutting down this ride indefinitely," instead of, "Disney is closing Splash Mountain to re-theme it to The Princess and the Frog." The algorithms reward bullshit.

It stands to reason that if AI is embedded in search, this will only get worse. It's not just the critical thinking, it's that the machines learn from us, and there are a lot of really terrible, toxic things on the Internets. How does it know the difference?

But I think the worse thing is that if the Internet becomes a training data set, based in part on the work of hundreds of thousands of independent publishers, their work is just synthesized into answers for every question, without compensation. Google is already walking this line, showing a few sentences scraped from sites as answers. The publishers don't get the traffic, which means they don't even get the crappy ad revenue. When they can't afford to stay on the air, they disappear, leaving only the worse data set: social media.

I know, that all sounds pretty bleak. I'm not sure what we do about it.

Apple won me back on laptops

posted by Jeff | Tuesday, January 24, 2023, 9:17 PM | comments: 0

About five years ago, I broke a dozen-year streak of Mac laptops with a Windows laptop. With Mac and Windows both using Intel processors, the hardware inside was largely equivalent, depending on what you bought. I've mostly liked the HP I had, and the Surface Laptop 4 since (I also still have a Surface Pro tablet/PC from eight years ago). Windows 11 is actually pretty solid, continuing an evolution that's been going on since 2009-ish. Still a lot of quirks, but it's generally reliable.

I didn't go to Windows as much as I went away from Mac. By 2018, Apple had taken some unfortunate turns with the hardware. They stopped putting useful ports on the things, leading to dongle hell, they ditched MagSafe power, and then there was the biggest sin, the one that led to class action lawsuits: that horrible keyboard and Touch Bar. That, and the prices were not even remotely competitive. My last Mac was a 2014 13" model, and I know a lot of people held on to machines from that year for the keyboard alone.

Fast forward to late 2020, when Apple released its first machines based on its own custom CPU. The next year, it spread the processors, including faster ones, across the product line, and people went ape shit. Benchmarks blew minds, and the machines were especially great for creator types who work with video and other media. The new machines reversed all the poor choices I mentioned before, and they introduced a 16" version. I got one for work, and I couldn't believe how great it was. This year's generation brings incremental improvements, but it's still high end.

Mind you, they're still pretty expensive. It's relative, I suppose, as I bought a $2,500 Sony in 2000 ($4,200 in today's dollars!) to work with DV, but they're still steep relative to Windows laptops. Sort of. The only real equivalents are gaming laptops, which are probably the closest things in terms of performance, and they're about as expensive. For normal people, I will say that the new MacBook Airs are a pretty good deal. But even then, we got Diana a Surface Laptop Go 2 for $600 that feels premium-ish and suits her well. It depends on what you do. I haven't worked with video on a laptop since HD days, and even then it was a struggle and rendering was crazy slow.

But the thing that I love the most about these new things is the battery life. These ARM processors are way more efficient, and Windows on ARM has a long way to go. Not only is battery life crazy good, but they don't get so damn hot. People seem to be getting 10 hours on a charge at the low end. I get 6 to almost 8 hours on my Surface Laptop, depending on what I'm doing and how bright the screen is. That seems to be right around a good spot, but I've definitely hit points where I had to plug in because I was on and off of it over the course of a day and it ran out toward the end.

macOS hasn't changed much, but it's a solid operating system. Fortunately, they haven't messed with it just to mess with it. It's not like iOS, which is a trainwreck of horrible settings UI and absurd gestures to do anything. I can't stand the iPad Mini we bought last year, and I only use it for testing.

Am I going to buy one of these new things? I'm not really planning on it, but never say never. If discounts go deep on the previous generation, that would help. If I do buy one, honestly, part of the reason will be that I miss having a bigger screen. I've had small and light for a long time now, but that 17" I had a decade ago was awesome, and that was before high-resolution screens.

Welcome back, Apple. Sorry, Jony Ive, your portless design was crappy.

These are dark times for online ad revenue

posted by Jeff | Sunday, January 22, 2023, 7:48 PM | comments: 0

This is going to be the second month in a row that ad revenue from my sites will not cover expenses. It's not a traffic problem either, because while CoasterBuzz is down a little, PointBuzz is way up, by about 25% compared to last year. The issue is that what I'm getting for those page views is about half of what it was last year.
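The math on that is worse than either number suggests on its own, because revenue is page views multiplied by revenue per page view, so the two changes compound. A quick Python sketch using the approximate PointBuzz figures above (traffic up about 25%, revenue per page view down about half); the function names are just illustrative:

```python
def revenue_ratio(traffic_change: float, rate_change: float) -> float:
    """This year's revenue as a fraction of last year's.

    Revenue = page views x revenue per page view, so the relative
    changes multiply rather than add.
    """
    return (1 + traffic_change) * (1 + rate_change)


def traffic_change_to_break_even(rate_change: float) -> float:
    """Traffic growth needed to keep revenue flat after a rate change."""
    return 1 / (1 + rate_change) - 1


# Approximate PointBuzz figures: traffic +25%, revenue per page view -50%.
ratio = revenue_ratio(0.25, -0.50)
print(f"{ratio:.3f} of last year's revenue ({ratio - 1:+.1%})")
# prints "0.625 of last year's revenue (-37.5%)"
print(f"traffic needed to break even: {traffic_change_to_break_even(-0.50):+.0%}")
# prints "traffic needed to break even: +100%"
```

In other words, even a 25% traffic bump leaves revenue down by more than a third, and traffic would have to double just to stand still at the lower rate.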

My hard costs have come way down over the years, thanks in part to the cloud resources I'm using (in my case, Azure). My total hard costs monthly are about $250, and that includes a buttload of redundancy and pretty spectacular performance. It's a little more than I paid for the last dedicated server I ever had in 2014-ish, but that included no redundancy and pretty mediocre performance. And of course, in the early days, I was shelling out over a grand per month for a dedicated connection to my house.

There are soft costs, too, like the annual LLC fee to the state, an accountant fee for my taxes, monthly fees for software (mostly Adobe, though I've been able to keep that down to $30), domain name fees and some other things I'm not thinking of. Half of the PointBuzz revenue also goes to my long-time partner, Walt. Spread out over a year, my total costs probably average between $300 and $350 a month. That sounds like a lot, but it's a small miracle to be able to pay that little for what it gets me.

Not sure exactly what I can do about this. If you've been to any site lately, especially on mobile, you know that they're loaded with video ads and all kinds of bullshit. I don't want to have that on my sites. If I were particularly wealthy, I'd just eat the cost and not worry about it, but this makes me uncomfortable. November to January are always slower months, but I'm not seeing any signs of improvement.

I hope it gets better, but hope is not a strategy. I hate this world of the ad duopoly and platforms running everything. That's not what we signed up for on the Internet.

Academic freedom is under attack from the right and the left

posted by Jeff | Thursday, January 19, 2023, 7:40 PM | comments: 0

When I was a student at Ashland University, the university housed (and still houses) a quasi-independent political non-profit organization in the library penthouse. I say "quasi" because university officers sit on its board, and two of its board members sit on the university board. It is a rightward, conservative organization that at the time lacked diversity and seemed to have an outsized influence on the political science program. Worse yet, they brought in some big-name speakers while I was there that the general student population was not invited to see, specifically Margaret Thatcher and Dan Quayle. Admittedly, the latter never seemed interesting, but he was a vice president regardless. As the resident liberal newspaper columnist, I called them out pretty frequently for being one-sided and limiting legitimate discourse, and the organization never responded directly.

On the flip side, we had a course that I think was required, called "Race and Ethnic Relations." The subject matter was what it sounds like, and it was taught by a veteran who spent time on the front lines of the civil rights movement in the 1960s. Despite growing up until age 14 in an inner-city environment that consisted mostly of minorities, I don't think I really appreciated the struggles of that era until I took that course. It was the first time I had ever heard the term "institutional bias," and that was in 1993. It was one of the best classes I took in college, with a lot of very spirited discussions. I looked forward to it because I knew I would gain historical perspective that I lacked.

One university, two sides of academic freedom. On one side, discourse was stifled and limited, and on the other, it was unrestricted and sometimes made people uncomfortable. I would argue that the latter is what learning is about, but we seem to suck at it as adults.

Academic freedom is currently under attack from a number of vectors, and they're not simply right or left. Obviously the biggest effort from the right can be found right here in Florida, where a law essentially says you can't teach about things that might make white hetero people feel bad. One judge has already blocked it in higher education, another blocked its enforcement against employers, and it's still working its way through the courts for K-12. It obviously violates the First and Fourteenth Amendments. Now the state wants to block AP African American Studies from being taught, without any explanation of why the class, which is still being piloted and refined by the College Board, violates the law.

But it's not strictly a phenomenon of fascist right-wing politicians. Sometimes it comes from within institutions that are generally regarded as left leaning. A former director of Human Rights Watch was denied a fellowship at Harvard, allegedly because of things he said that were critical of Israel. The man is Jewish and his father fled the Nazis, so the suggestion that he is antisemitic because he's critical of Israeli policy on human rights seems a little over the top, especially in an academic setting.

That's not the only story in this category recently. An art professor from a small school was essentially fired for showing, with warnings about the sensitivity around it, an image of the Prophet Muhammad, which is prohibited by Islam. The university responded to the complaint of a student who found it deeply offensive, and the school quickly labeled the professor as Islamophobic. I can't possibly relate to the student, obviously, but I thought that the Council on American-Islamic Relations took a thoughtful position on the subject:

Although we strongly discourage showing visual depictions of the Prophet, professors who analyze ancient paintings for an academic purpose are not the same as Islamophobes who show such images to cause offense.

In all three of these academic situations, intent matters. If we leap from discourse straight to hate, we fail to understand each other and the basic anthropology that put us where we are. The gulf between legitimate discourse and demagoguery should at least be apparent in an academic setting. American society would benefit from that distinction, too. Enabling discourse isn't the same as granting legitimacy to morally inequivalent positions. And I don't mean that in a Tucker Carlson "I'm just asking questions" bullshit way.

We all have a right to avoid things that interfere with our well-being. You'll get no argument from me about that. But discomfort over academic discourse does not share intent with bigotry.

Creative anxiety

posted by Jeff | Tuesday, January 17, 2023, 10:06 PM | comments: 0

I have made a ton of stuff in the last few years, and regardless of how much of it is seen or used, I'm deeply satisfied by it. I posted another LEGO time-lapse on my silly YouTube channel (help me out and subscribe) that YouTube will make money off of for free, but that's fine. Most of what I make is for me first, and if others find a benefit, that's a bonus. The scope doesn't matter. It's also important to make the distinction that the stuff I make is largely a solitary effort. No one else is really involved.

Now I'm endeavoring to make something that will involve a whole lot of people and travel, and take a significant amount of time, probably over the next year. I simultaneously feel an intense drive to make it happen and an equally intense anxiety that makes me want to just stay home and not do it. That's the reason that I haven't really written about it yet. If I don't write about it, I don't have to admit that I didn't do it. It's like creative cowardice, if that's a thing.

I'm fairly certain that the anxiety is rooted in the same thing that kept me from doing my silly short videos at first or writing any kind of fictional screenplay. I'm just deeply afraid that it's going to suck. I've somehow forgotten how to allow things to suck as a means of learning. When it comes to art, I want to have that story where an unknown creates something marvelous and everyone loves it. I'm skipping the part where it's for me and making it about others.

At least I understand the source of the anxiety, but it sure is hard to talk myself out of it. We'll see where I am with it in a few weeks.

Working for outcomes, not hours

posted by Jeff | Saturday, January 14, 2023, 11:34 AM | comments: 0

There is a subset of executives who are making moves to put people doing desk jobs back in offices. They usually cite some reasoning that people collaborate better in person. Research tends to show that people are more productive, less stressed and less likely to bail when they work remotely. I suspect there are other reasons they really want butts in seats. It's likely some combination of having real estate that they're already paying for and a lack of trust that people are doing the work.

Salaried, white collar desk jobs are still in a strange place, where some believe that they're paying you for your time instead of your outcomes. A CEO once asked me how I know if my software engineers are working when they're remote, and I asked him how he knew his sales folk were working (his background was sales). He said, unsurprisingly, that they brought in revenue. I simply told him, software engineers deliver software. He didn't have a good response for that.

The truth is that most non-hourly jobs have some level of ebb and flow when it comes to the workload. I absolutely have weeks where I really only have a good 30 hours of things to do, but I also have weeks where I'm in it for 50 hours. It shouldn't matter. I'm not accountable for time spent, I'm accountable for outcomes. My reviews aren't about time spent, they're about outcomes.

Think about all of the jobs that are obviously outcome driven. Teachers, actors, lawn mowers, Doordash drivers... you aren't monitoring the time they take to do the job. (Sidebar: this works in the negative for teachers, who are paid too little given the time spent.)

The value of professionals is in the things that they can do, not how much time it takes to do them. They aren't doing hourly work that results in more output with time.

Pick a hobby!

posted by Jeff | Friday, January 13, 2023, 10:30 AM | comments: 0

What a difference a year makes. A year ago, I couldn't get off the couch, but now I want to do all of the things. I'm following through on doing stuff that I've wanted to do for a long time but didn't have the energy for. Now I have a problem of plenty.

My office is getting crowded. Right now, I have video gear all over the place as I take inventory of what I have, what I need and such, for a project I'm doing (more later). If that weren't enough, I still have the electronic drums set up. I also still have my lights in a case and the fog machine in the corner, and I'm not sure where to put those. I have a vintage computer on the floor, and a box of stuff to go with it, that I haven't fired up yet. The LEGO Eiffel Tower is sitting on my network cabinet, and the box for it is in another corner. I can barely get to the fridge in the Pac-Man machine. Oh, and I pulled out my old cassette deck, and if I can find my tape stash, I want to show Simon what it was like to make mix tapes back in the day, maybe make a video about it.

I suppose this is what ADHD looks like, as I hop from one thing to the next and back again. The stereotypical hyperfocus lands on one thing or another for a while, which is good because it results in finishing things. Right now, though, I have to focus on the video project, which has some preproduction to get on with. I enjoy spending 15 minutes at a time on the drums, and there are even some songs that I would like to try playing along to, but they have fallen down the priority list. If I put the drums away, they'll probably never come back out, and that feels icky.

These are all good problems to have, certainly. I'm eager to get up in the morning because of it all, and I go to bed late for the same reason. (Which might be why I'm tired!) Unfortunately, I do seem to apply an almost work-like standard to hobbies, where I must reach certain objectives to validate these endeavors. I sometimes feel like the artist in a room full of paint, a mess everywhere, but not a single completed painting. At that point, are you even an artist? I have to be zen with the chaos, because I'm having fun and I shouldn't make it feel like work. The one exception right now is the video thing, because that does involve other people's time.

One of the things that I've noticed is that my head leaps ahead in a lot of the things I'm interested in. That's a double-edged sword. On one hand, it makes it difficult to do the thing in the moment, and do it well. But sometimes, especially with video, I'm editing in my head as I shoot, which makes it easier to make sure I'm getting what I need. Sometimes I get that while writing code, though it can make it harder to start. Understanding things like this makes me want to lean into those activities.

I have so many activities.

The mental fatigue of autism

posted by Jeff | Saturday, January 7, 2023, 3:12 PM | comments: 0

I find myself mentally tired a lot. As in, I get to Friday night and I don't even have the energy to watch mindless TV. I can't relate to people who always have to be doing something. I am in fact at peace with doing literally nothing other than occupying space.

When I ask others if they ever get like this, almost everyone says they do not. With me, I get it more in the winter, so there's an environmental variable. I also feel this way when I have a lot of consistent human interaction. This may explain why I experience it more in this, the later part of my career, where I'm not heads-down making stuff and spending far more time talking with others to make things happen or lubricate the gears of industry.

The odd thing about this is that I'm reasonably good at it. Over the years I've been able to emulate a lot of positive and constructive behaviors that would not otherwise come naturally to me. For example, right out of college, I went into hardcore, unannounced, door-to-door sales mode, selling myself to get a radio job. I got the idea from reading about work in sales, and figured I could just apply it to selling me. This strategy worked, and I got a job. At the end of those days I would be completely fried and sleep most of the next day. I know now that autism likely played a role in that fatigue, as it takes a lot of energy to emulate a behavior you don't enjoy or typically feel comfortable with. I also know that most neurotypical people have filters, and an adherence to social contracts, that tell them you "shouldn't" show up at offices without an appointment.

Conforming to the expectations of the rest of the world is often described as a coping skill for people with autism spectrum disorder. We have to be less weird, and engage in customs and social contracts that may seem arbitrary or pointless. Everyone learns to do this, obviously, but there is a larger cognitive cost to doing it with ASD. I started learning about this some years ago by following Simon's journey, and even then it sounded so familiar that I was sure it applied to me. I would even talk about it with some people. But it was after my diagnosis 15 months ago that I felt like I had permission to sincerely explore how it all has affected me my entire life. Things made more sense.

I'm not looking for exceptions or accommodations at this stage of my life. I can self-regulate behaviors for the most part. I'm not always perfect about it, but I imagine I get along as well as anyone. But what I do want from people who get to know me is just a little empathy to understand that sometimes I'm spent. I need to disengage because I don't have anything left. It isn't personal. It's another aspect of the brain that is separate from intelligence.

This first week of the year involved a lot of change, new employees, new plans and such. I'm not overwhelmed in the moment, but when I unplug, it's like a long exhale after holding my breath for days. My hope is that now that I understand this phenomenon, I can figure out ways to lessen the impact.

Team Canon for life

posted by Jeff | Saturday, January 7, 2023, 12:27 PM | comments: 0

My first "real" camera was a Nikon F, as in, a Vietnam War-era SLR film camera. My dad loaned it to me in high school for yearbook, then again in college for my photography class. I really learned exposure theory because of that manual beast.

After that, I bought another Nikon my senior year from a camera shop in my college town. Purchase regret caused me to return it. I also found the usability, the human factors, to be a step back from the old camera that was older than me.

Some years later I bought the Canon Elan IIe, based on reviews from that new Internet. The best thing was that it did some limited eye tracking for focus. But it grounded me in the Canon system, a decision that lasted well into the DSLR and video transition. The important part was the EF lens system, for which I still have amazing lenses. When it came time to buy a cinema camera, I initially bought a C200, then traded laterally to the C70 and an EF-to-RF speed booster, so I could use my old lenses with the new lens system. I later bought an R6 mirrorless camera body, also using RF lenses, for stills.

This was a great decision. Of course the glass quality is amazing, but the big story is the updates to the C70. This is very much an experiment for Canon, because they took a lot of the guts from one of their "normal" cinema cameras, the C300 Mark III, and in many ways repackaged them into this DSLR-like box. Historically, they would often cripple the lower model so it wouldn't compete, but this one has only become more capable with updates. They added 12-bit raw recording, which means you can get video that's a lot easier to fix if you got the exposure wrong. It already used great 10-bit 4:2:2 recording formats. They added time lapse recording, which was oddly absent in the first place. Last month they added eye tracking focus, a great feature that you find on other cameras. It isn't the champion of autofocus, but it's pretty great. It's an insanely capable camera for what it costs.

I've really admired some of the other cameras out there, especially from Panasonic, which I have a lot of experience with, and Sony, with which I do not, but I'm definitely Team Canon. The L lenses are not cheap, but they're the starting point for really great images, moving and still. I don't use them enough.

PC upgrades? What are those?

posted by Jeff | Tuesday, January 3, 2023, 10:07 PM | comments: 0

It's hard to believe that it has been three and a half years since I built my desktop computer. It was kind of a surprise that I built any computer. Shortly after building it, I did add a second monitor and a second SSD, but it has otherwise not changed.

That's not interesting in the context of computers today, as we've been able to get as much as eight years out of computers around here. CPU capability, memory requirements and drive space finally exceeded the growth needs of regular people. That's different from the old days where I continually upgraded my computer, at least one significant component every year. I don't know if I needed to keep upgrading, but it felt like I should.

I'm at a point now where I am running up against one constraint, and that's disk space. I haven't made that much video in the last few years, but that 4K stuff adds up fast. I probably need to invest in a 4TB drive, which I imagine will be good enough for another few years at least.

I have to admit to thinking about new speakers, too. I've had the same Altec Lansing speakers, with a subwoofer, since 1997-ish. They were a gift from Stephanie when she was still working at CompUSA in grad school. They still work OK, but they're a little noisy. They've been near three lightning strikes. That's straight up a want, not a need.

What's remarkable is that I definitely don't need a new video card. That was the biggest money suck back in the day, as there were new cards every few months with incremental improvements. I'm not gaming much these days, but even still, I can't see much difference beyond 60 fps.

There is some calculus around using parts to build Simon a computer, against my better judgment (peeling him off the machine is hard). But certainly he can use a slightly used hard drive, and maybe even the CPU and motherboard, given how little the new generations matter.

I do most development on my laptop, I guess because sitting at my desk would feel like work. It's not the fastest thing, but the thin-ass laptops I like are not quite desktop strength. But video work definitely benefits from some muscle. Even a lot of that is handled by the GPU, which, as I said, hasn't been significantly outclassed yet. All this to say it's really only video that demands a lot of computational power.

I want to be disappointed that a 4TB solid-state drive is almost $300, but I'm so fucking old that I remember spending that for a single gigabyte. 4000x is a crazy multiplier.

About that new year

posted by Jeff | Sunday, January 1, 2023, 8:45 PM | comments: 0

"I'm gonna be a new level of awesome this year!"

Declarations like this are pretty common when the new year rolls around. I never really thought much about them outside of the fact that it's usually not more than a passing sentiment that lasts for a few weeks. Thinking a little more about it though, it feels like we're culturally wired to assess ourselves in a mostly negative way and declare that we must do better. This doesn't sit well with me.

Continuous self-improvement is admirable, and I imagine it generally happens as a function of aging. I'm sure some people can find creative ways to measure the improvement, but that's not what I'm getting at. I'm talking about the feeling that we weren't good enough in the prior year. That seems like the underlying sentiment behind these annual declarations. The cultural phenomenon subtly allows us to lessen ourselves in the name of a passing year.

I think that's crappy. We should be giving ourselves the space and understanding that we did what we could with that time. Whatever we could not do doesn't matter, because we're not going to get that time back anyway. It's better to declare that you were as awesome as you could be, and that's enough to make you a valuable human. Never feel that you were less than that. Celebrate who you are, not what you could have been.